Why Deferring Everything Doesn't Always Work

Modern hardware is fast. Networks are fast. Browsers have gotten really good at loading pages.

So we’ve stopped thinking about resource loading entirely.

Since around 2015, defer has become the safe default for most first-party scripts: it doesn’t block HTML parsing, it preserves execution order of the scripts, and it runs after the document is parsed. Exactly how it should be. Is it though?

Here’s the thing: “just defer everything” can be a bad idea if you’re not paying attention to whether your app startup is network-bound or CPU-bound. You can accidentally create “dead time”, where the CPU waits on the network and then the network waits on the CPU, and delay the stuff users actually notice.

Quick refresher on script loading

If you already know this, skip ahead. Otherwise:

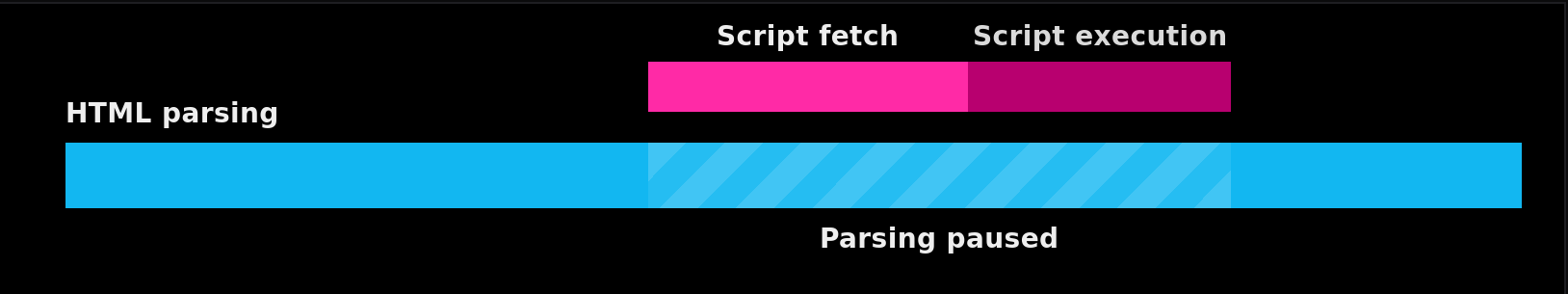

Classic scripts (blocking):

<script src="https://example.com/app.js"></script>

The parser hits this, pauses, fetches the script, executes it, then resumes parsing. This was the default behaviour in the early days of the web (and still is on some sites).

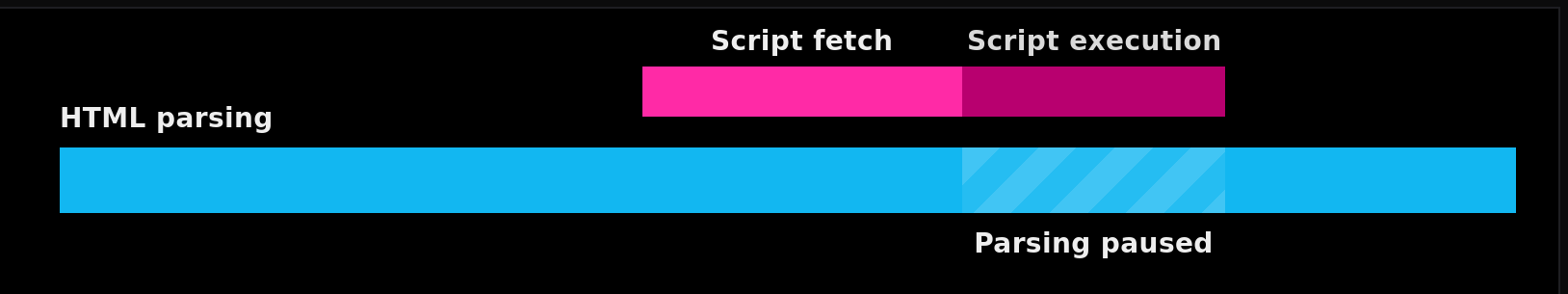

async scripts:

<script async src="https://example.com/analytics.js"></script>

Downloads in parallel with parsing, but executes as soon as it is ready, which can interrupt parsing at an arbitrary point. Execution order is not guaranteed.

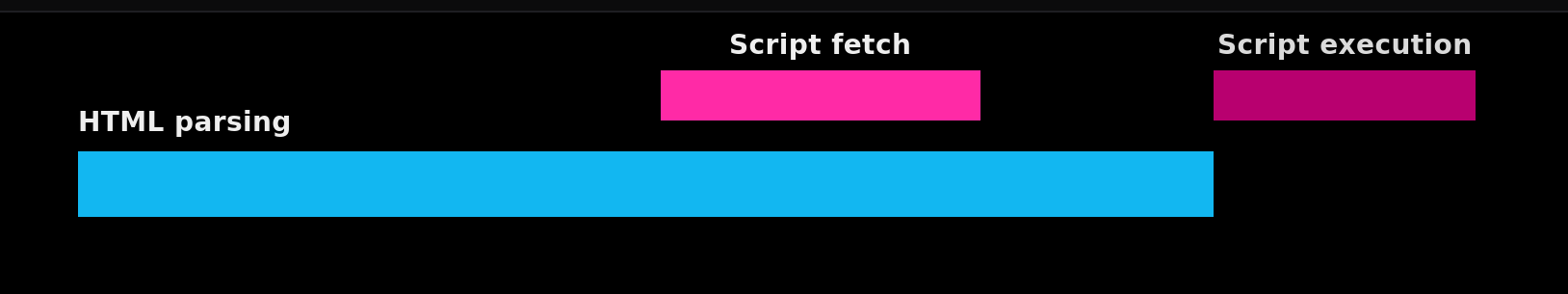

defer scripts:

<script defer src="https://example.com/vendor.js"></script>

<script defer src="https://example.com/app.js"></script>

Downloads in parallel, executes after the document is parsed, in document order. DOMContentLoaded waits for them.

Module scripts are deferred by default and behave differently from the above, but we will not discuss them here. 1

The problem: concurrent may not always mean fast

Most performance advice and our intuition tell us that:

More parallel downloads = faster page

But page load isn’t just downloading your website from the server and showing it to the user. (Maybe it is for some static pages without much dynamic content, but let’s ignore that for the sake of argument.) It’s a pipeline of:

- Network time: fetching the data

- CPU time: parsing, compiling, executing JS; building DOM/CSSOM; layout/painting the screen

It’s easy to get into the habit of deferring everything (especially big scripts), but that means:

- Bandwidth split across many downloads, so nothing finishes early

- A bunch of scripts finishing close together

- Then a main-thread execution pile-up, because JS still executes largely serially

This is what patterns.dev calls dead time: CPU waiting while the network is busy, then the network idle while CPU chews through a mountain of work. 2

It is important to acknowledge that modern JS engines can parse/compile some stuff off the main thread. 3 But main-thread execution and DOM work are still the bottleneck when you hit a big “everything runs now” moment, especially when several such scripts all want to run at once.

So, from the above information, we can conclude that what we want is not concurrency, it’s pipelining:

- Download something

- Start processing it as soon as it’s ready

- While the next thing downloads

Not because concurrency is bad. Because bad scheduling is bad.

Here’s a simplified model that shows the difference:

Demo

Try this simulation:

- Start with Defer everything.

- Switch to Intentional scheduling.

- Increase Network load and Main-thread load to see how each strategy behaves under pressure.

You should notice the “defer everything” preset tends to bunch work into one big execution moment, while intentional sequencing pipelines network and execution better.

How to read this demo

- Each row is one resource. Earlier bars start sooner; wider bars take longer.

- Network load multiplies download time. Main-thread load multiplies execution time.

- Compare the two presets and watch where work bunches up.

[Interactive demo: a timeline with Parser, Network (download), and Main thread (execute) lanes, reporting FCP, LCP-ready, Interactive, and Main-thread dead time.]

Simplified model to focus on relative timing and batching behavior, not exact timing.
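The same idea can be sketched as a toy calculation. This is a rough model, not a description of how any browser actually schedules work: it assumes three equally sized scripts, a link that is shared fairly between concurrent downloads, and execution in document order as soon as a script is ready (it ignores that defer also waits for parsing to finish). The function names and all the numbers are invented for illustration.

```javascript
// Toy model: a size is "ms of download at full bandwidth", an exec time is
// "ms on the main thread". All numbers are invented for illustration.

// Concurrent downloads: every unfinished download gets an equal slice of the link.
function fairShareFinishTimes(sizes) {
  const bySize = sizes
    .map((size, index) => ({ size, index }))
    .sort((a, b) => a.size - b.size);
  const finish = new Array(sizes.length);
  let now = 0;
  let received = 0; // full-bandwidth ms each still-active download has received
  bySize.forEach(({ size, index }, k) => {
    const active = sizes.length - k; // downloads still sharing the link
    now += (size - received) * active;
    received = size;
    finish[index] = now;
  });
  return finish;
}

// Pipelined downloads: one at a time, each at full bandwidth.
function sequentialFinishTimes(sizes) {
  let now = 0;
  return sizes.map((size) => (now += size));
}

// Execute in document order, each script as soon as it is downloaded and the
// main thread is free. `idle` is main-thread time spent waiting on the network.
function runInOrder(readyTimes, execTimes) {
  let free = 0;
  let idle = 0;
  let firstStart = null;
  readyTimes.forEach((ready, i) => {
    const start = Math.max(free, ready);
    if (firstStart === null) firstStart = start;
    idle += start - free;
    free = start + execTimes[i];
  });
  return { firstStart, idle, done: free };
}

const sizes = [100, 100, 100];
const exec = [80, 80, 80];
const deferAll = runInOrder(fairShareFinishTimes(sizes), exec);
const pipelined = runInOrder(sequentialFinishTimes(sizes), exec);
console.log(deferAll);  // { firstStart: 300, idle: 300, done: 540 }
console.log(pipelined); // { firstStart: 100, idle: 140, done: 380 }
```

The network is busy for the same 300 ms in both cases. What changes is that the pipelined run starts executing at 100 ms instead of 300 ms, so execution overlaps with the remaining downloads.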

Does script placement still matter?

For blocking scripts: Yes, absolutely. If you must use them, put them at the end of <body>. Otherwise the user will be left hanging on a white screen (or black if they’re a dark mode user, no judgement) while waiting for the page to load.

For defer/async scripts: Also yes, but in a different way. The parser won’t stop, so it matters less. But position still affects:

- Discovery: the browser can start fetching a script only once it has encountered it.

- Competing resources: that fetch takes bandwidth from other resources downloading at that moment (CSS, fonts, hero image).

Browsers run a preload scanner that can discover some resources early even while the main parser is blocked, but only if the URLs exist in the markup. If you inject scripts via JS dynamically, the preload scanner can’t see them ahead of time. 4

Based on this, we can say that script placement is an important tool for scheduling resource discovery.
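To make the preload scanner point concrete, here is a minimal sketch of dynamic injection. `injectScript` is a hypothetical helper, and `doc` stands in for the page's `document` (it is passed in as a parameter only so the function can be exercised outside a browser). The URL exists only inside this JavaScript, so nothing in the markup tells the scanner about it.

```javascript
// Hypothetical helper: the script URL appears only here, not in the HTML,
// so the fetch cannot start until this code itself has been fetched and run.
function injectScript(doc, src, { ordered = false } = {}) {
  const script = doc.createElement("script");
  script.src = src;
  // Dynamically inserted scripts behave like async by default. Setting
  // async = false asks the browser to execute them in insertion order.
  if (ordered) script.async = false;
  doc.head.appendChild(script);
  return script;
}

// In a page (the path is a placeholder):
// injectScript(document, "/js/widget.js", { ordered: true });
```

That late discovery is sometimes exactly what you want (for optional scripts) and sometimes an accident (for critical ones), which is why it helps to choose it on purpose.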

Real scenario

Let’s consider a generic landing page with:

- HTML content + hero image (likely the LCP element)

- CSS that makes the initial view meaningful (needed for FCP)

- Three scripts: widget, app, analytics

The default “performance checklist” approach (or just what happens when nobody is thinking about performance at all):

- Defer everything

- Preload everything

- Hope the browser figures it out (and it may do just that for many scenarios)

But if you preload too much and defer blindly, you create a bottleneck at the worst possible time: critical CSS, the LCP image, and heavy JS all fight for the same early bandwidth. Then the JS executes back to back right when the user wants the page to feel responsive.

Also: CSS is render-blocking by default, so delaying critical CSS delays FCP. 5

So what, should we wind back time and inline everything?

Not entirely. But also kind of yes, in a strategic way.

A better approach

1. Inline just enough CSS for a meaningful FCP

Inlining critical CSS removes a render-blocking request from the first paint, so users see meaningful content sooner, especially on high-latency networks. 6 After that, you can load the rest normally (or async if you know what you’re doing).

2. Prioritize the LCP image on purpose

If the hero / banner image is likely the LCP element, give it priority. The fetchpriority="high" attribute exists specifically for scenarios like this. 7
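Steps 1 and 2 might look like this in a server-side template. This is only a sketch: `renderPage`, its parameters, and the `/css/rest.css` path are placeholders, and the inputs are assumed to be trusted and already escaped. The point is just where things sit in the markup: critical CSS inline in the head, and the hero image present in the HTML with `fetchpriority="high"`.

```javascript
// Hypothetical template function. All names and paths are placeholders, and
// the inputs are assumed to be trusted, pre-escaped strings.
function renderPage({ criticalCss, heroSrc, heroAlt }) {
  return `<!doctype html>
<html>
<head>
  <!-- Step 1: just enough CSS for a meaningful first paint, inlined -->
  <style>${criticalCss}</style>
  <!-- The rest of the CSS loads normally -->
  <link rel="stylesheet" href="/css/rest.css">
</head>
<body>
  <!-- Step 2: the likely LCP image is in the markup and marked high priority -->
  <img src="${heroSrc}" alt="${heroAlt}" fetchpriority="high">
</body>
</html>`;
}

const html = renderPage({
  criticalCss: "body{margin:0}",
  heroSrc: "/img/hero.jpg",
  heroAlt: "Product hero",
});
```

Because the image tag is in the HTML itself, the preload scanner can find it early, and the attribute only hints at its priority relative to other requests.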

3. Don’t load all JS just because you can

- widget required for first interaction? Load it early (defer) so that the user can interact with the page as soon as possible.

- app not needed until later? Load it after first paint or on interaction.

- analytics optional? Push it out of the critical path (async + delayed).

Not everything belongs in the same execution path. 2
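One way to express “on interaction” and “delayed” is sketched below. `onFirstInteraction` and `whenIdle` are hypothetical helpers, not part of any library, and the 2000 ms fallback is an arbitrary choice. `requestIdleCallback` is not available in every browser, hence the `setTimeout` fallback.

```javascript
// Run `load` once, the first time any of `events` fires on `target`.
function onFirstInteraction(target, events, load) {
  let fired = false;
  const handler = () => {
    if (fired) return;
    fired = true;
    events.forEach((type) => target.removeEventListener(type, handler));
    load();
  };
  events.forEach((type) =>
    target.addEventListener(type, handler, { passive: true })
  );
}

// Run `load` when the browser reports idle time, or after `timeout` ms
// where requestIdleCallback is not available.
function whenIdle(load, timeout = 2000) {
  if (typeof requestIdleCallback === "function") {
    requestIdleCallback(load, { timeout });
  } else {
    setTimeout(load, timeout);
  }
}

// In a page (paths and loadAnalytics are placeholders):
// onFirstInteraction(window, ["pointerdown", "keydown"], () => import("/js/app.js"));
// whenIdle(() => loadAnalytics());
```

The trade-off is that the first interaction now pays for the download of app, so this fits code that is genuinely not needed up front.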

4. Work with the preload scanner

If a resource is discoverable in HTML, the browser’s preload scanner can find it early. If you inject resources late via JS, you delay them. But make resources discoverable with intent: as discussed earlier, it is not always a good idea to preload everything. 4

The browser is good, and it keeps getting better, but it will never be able to read our minds. We should annotate and place things appropriately, and not assume concurrent is always faster.

References

1. MDN: <script> element (async/defer behavior): https://developer.mozilla.org/en-US/docs/Web/HTML/Element/script

2. patterns.dev: Optimize your loading sequence: https://www.patterns.dev/vanilla/loading-sequence/

3. V8: Background compilation: https://v8.dev/blog/background-compilation

4. web.dev: Preload scanner: https://web.dev/articles/preload-scanner

5. Chrome DevTools: Render-blocking resources: https://developer.chrome.com/docs/performance/insights/render-blocking

6. web.dev: Extract critical CSS: https://web.dev/articles/extract-critical-css

7. web.dev: Fetch Priority: https://web.dev/articles/fetch-priority